Innovation is the primary driver of GDP growth. If we want to understand how most new wealth is created – and (perhaps) if we want to find inspiration for our own tinkering – we should study history, especially economic history, the history of science, and the history of technology.

A new book, The One Device: The Secret History of the iPhone (New York: 2017, Little, Brown and Company), is a fascinating tale by Brian Merchant.

I’ve summarized each chapter (except for one):

- Introduction

- Exploring New Rich Interactions (ENRI)

- A Smarter Phone

- Minephones

- Scratchproof

- Multitouched

- Prototyping

- Lion Batteries

- Image Stabilization

- Sensing Motion

- Strong-ARMed

- Enter the iPhone

- Hey, Siri

- Designed in California, Made in China

- Sellphone

- The Black Market

- The One Device

(Photo by Pavel Ševela, Wikimedia Commons)

INTRODUCTION

The iPhone is the bestselling product of all time:

In 2016, Horace Dediu, a technology-industry analyst and Apple expert, listed some of the bestselling products in various categories. The top car brand, the Toyota Corolla: 43 million units. The bestselling game console, the Sony PlayStation: 382 million. The number-one book series, Harry Potter: 450 million books. The iPhone: 1 billion. That’s nine zeroes. “The iPhone is not only the bestselling mobile phone but also the bestselling music player, the bestselling camera, the bestselling video screen and the bestselling computer of all time,” he concluded. “It is, quite simply, the bestselling product of all time.”

Merchant cites a study by Nielsen that found that Americans spend an average of 11 hours a day in front of a screen. About 4.7 of those hours are in front of a phone. A study by British psychologists discovered that people probably use their phones twice as often as they think they do.

(Photo by Olena Golubova)

Two-thirds of Apple’s revenues come from the iPhone. People read news, engage in social media, use Google Maps, send and receive messages, check email, employ calendars and workflows, and take pictures. Merchant:

The iPhone isn’t just a tool; it’s the foundational instrument of modern life.

But the invention of the iPhone – like many inventions – was a culmination of a long series of inventions.

The iPhone intertwines a phenomenal number of prior inventions and insights, some that stretch back into antiquity. It may, in fact, be our most potent symbol of just how deeply interconnected the engines that drive modern technological advancement have become.

Merchant again:

The iPhone is a deeply, almost incomprehensibly, collective achievement… It’s a container ship of inventions, many of which are incompletely understood. Multitouch, for instance, granted the iPhone its interactive magic, enabling swiping, pinching, and zooming. And while Jobs publicly claimed the invention as Apple’s own, multitouch was developed decades earlier by a trail of pioneers by places as varied as CERN’s particle-accelerator labs to the University of Toronto to a start-up bent on empowering the disabled. Institutions like Bell Labs and CERN incubated research and experimentation; governments poured in hundreds of millions of dollars to support them.

Moreover, the mining of the raw materials used in the iPhone, and the factory labor that goes into mass-producing iPhones, are also central to the story. The result, writes Merchant, is what J.C.R. Licklider called man-computer symbiosis:

A coexistence with an omnipresent digital reference tool and entertainment source, an augmenter of our thoughts and enabler of our impulses.

Although Apple’s policy of secrecy made it difficult for Merchant to interview insiders, he still managed to speak with dozens of people, including iPhone designers, engineers, and executives.

EXPLORING NEW RICH INTERACTIONS (ENRI)

(Photo by Peshkova)

A small group – a few young software designers, an industrial engineer, and some input engineers – started meeting to invent new ways of interfacing with machines. Their mission: “Explore new rich interactions.” Merchant refers to this group as ENRI.

The team was experimenting with every stripe of bleeding-edge hardware – motion sensors, new kinds of mice, a burgeoning technology known as multitouch – in a quest to uncover a more direct way to manipulate information. The meetings were so discreet that not even Jobs knew they were taking place. The gestures, user controls, and design tendencies stitched together here would become the cybernetic vernacular of the new century – because the kernel of this clandestine collaboration would become the iPhone.

Two key engineers in the Human Interface group – also called the UI (User Interface) group – were Bas Ording, a Dutch software designer, and Imran Chaudhri, a British designer. Greg Christie, who’d come to Apple earlier to work on the Newton, ended up in charge of the Human Interface group after the Newton failed to sell well.

Civil engineer Brian Huppi had gone back to school to study mechanical engineering after reading a book about Apple, Steven Levy’s Insanely Great. The book tells the story of how Jobs separated key Apple players, put a pirate flag above their department, and pushed them to create the pioneering Macintosh.

Huppi got a job at Apple as an input engineer in 1998. He got to know the Industrial Design (ID) group, headed by Jonathan Ive. When he grew bored iterating laptop hardware, Huppi spoke with Duncan Kerr, who’d worked at the well-known design firm IDEO before coming to Apple. After Huppi and Kerr talked about innovations to the user experience, Kerr asked Jony Ive if they could form a small group to work on the topic. Ive liked the idea.

Huppi and Kerr started working with Christie, Ording, and Chaudhri. And they were joined by Josh Strickon, who came from MIT’s Media Lab. Strickon’s master’s thesis involved the development of a laser range finder for hand-tracking that could sense multiple fingers. The ENRI group met weekly in a conference room with their laptops. They took extensive notes, put drawings on whiteboards, and gave presentations to one another.

There were a lot of ideas. Some feasible, some boring, some outlandish and borderline sci-fi – some of those, Huppi says, he “probably can’t talk about,” because fifteen years later, they had yet to be developed, and “Apple still might want to do them someday.”

“We were looking at all sorts of stuff,” Strickon says, “from camera-tracking and multitouch and new kinds of mice.” They studied depth-sensing time-of-flight cameras like the sort that would come to be used in the Xbox Kinect. They explored force-feedback controls that would allow users to interact directly with virtual objects with the touch of their hands.

In many ways, the group was testing the limits of the old mouse-and-keyboard interface with the computer. Could there be an easier way to zoom, or to scroll and pan? Why couldn’t the user just tap, tap, tap on the screen for certain repetitive acts?

Tina Huang, an Apple engineer, had been experiencing wrist problems. One day, she showed up to work with a trackpad made by FingerWorks, a small company in Delaware. It allowed her to use fluid hand movements to communicate complex commands to her Mac. The technology was called multitouch finger tracking.

(Image by Willtron, Wikimedia Commons)

FingerWorks was founded by a bright PhD student, Wayne Westerman, and his dissertation advisor.

Resistive touch works by having two layers. When you push the outer layer, the inner layer registers the touch. But the resistive touchscreen is frequently inexact and glitchy. Capacitive touch, by contrast, works when the electricity in a human finger distorts the electrostatic field on the screen. Merchant:

A new, hands-on approach to computing, free of rodent intermediaries and ancient keyboards, started to seem like the right path to follow, and the ENRI team warmed to the idea of building a new user interface around the finger-based language of multitouch pioneered by Westerman – even if they had to rewrite or simplify the vocabulary. “It kept coming up – we want to be able to move things on the screen like a piece of paper on the table,” Chaudhri says.

The ENRI group worked very hard. But they barely noticed the long hours because they were exhilarated. They could sense the potential importance of new technologies like multitouch.

A SMARTER PHONE

In 1994, Frank Canova helped IBM invent a smartphone – the Simon Personal Communicator – that had most of the core functions of an iPhone. But the Simon was a box the size of a brick. The iPhone, coming over a decade later, was far more powerful. And it was thin and easy to use. The Simon was too far ahead of its time.

(Photo by Bcos47, Wikimedia Commons)

Merchant quotes history of technology scholar Carolyn Marvin:

In a historical sense, a computer is no more than an instantaneous telegraph with a prodigious memory, and all the communications inventions in between have simply been elaborations on the telegraph’s original work.

In the long transformation that begins with the first application of electricity to communication, the last quarter of the nineteenth century has a special importance. Five proto-mass media were invented during this period: the telephone, phonograph, electric light, wireless, and cinema.

Merchant sums it up:

The smartphone, like every other breakthrough technology, is built on the sweat, ideas, and inspiration of countless people. Technological progress is incremental, collective, and deeply rhizomatic, not spontaneous…

The technologies that shape our lives rarely emerge suddenly and out of nowhere; they are part of an incomprehensibly lengthy, tangled, and fluid process brought about by contributors who are mostly invisible to us. It’s a very long road back from the bleeding edge.

MINEPHONES

In the old colonial city of Potosí, Bolivia, there is a “rich hill” called Cerro Rico, nicknamed “The Mountain That Eats Men.”

The Mountain That Eats Men bankrolled the Spanish Empire for hundreds of years. In the sixteenth century, some 60 percent of the world’s silver was pulled out of its depths. By the seventeenth century, the mining boom had turned Potosí into one of the biggest cities in the world; 160,000 people – local natives, African slaves, and Spanish settlers – lived here, making the industrial hub larger than London at the time. More would come, and the mountain would swallow many of them. Between four and eight million people are believed to have perished there from cave-ins, silicosis, freezing, or starvation.

(Photo of Cerro Rico by Mhwater, Wikimedia Commons)

Today fifteen thousand miners – many of them children as young as six years old – continue to work the mines for tin, lead, zinc, and a bit of silver. Merchant comments:

…metal mined by men and children wielding the most primitive of tools in one of the world’s largest and oldest continuously running mines – the same mine that bankrolled the sixteenth century’s richest empire – winds up inside one of today’s most cutting-edge devices. Which bankrolls one of the world’s richest companies.

Merchant asked a mining consultant to analyze the chemical composition of the iPhone. Results:

| Element | Percent of iPhone by weight | Grams used in iPhone | Average cost per gram | Value of element in iPhone |
| --- | --- | --- | --- | --- |
| Aluminum | 24.14 | 31.14 | $0.0018 | $0.055 |
| Arsenic | 0.00 | 0.01 | $0.0022 | – |
| Gold | 0.01 | 0.014 | $40 | $0.56 |
| Bismuth | 0.02 | 0.02 | $0.0110 | $0.0002 |
| Carbon | 15.39 | 19.85 | $0.0022 | – |
| Calcium | 0.34 | 0.44 | $0.0044 | $0.002 |
| Chlorine | 0.01 | 0.01 | $0.0011 | – |
| Cobalt | 5.11 | 6.59 | $0.0396 | $0.261 |
| Chrome | 3.83 | 4.94 | $0.0020 | $0.010 |
| Copper | 6.08 | 7.84 | $0.0059 | $0.047 |
| Iron | 14.44 | 18.63 | $0.0001 | $0.002 |
| Gallium | 0.01 | 0.01 | $0.3304 | $0.003 |
| Hydrogen | 4.28 | 5.52 | – | – |
| Potassium | 0.25 | 0.33 | $0.0003 | – |
| Lithium | 0.67 | 0.87 | $0.0198 | $0.017 |
| Magnesium | 0.51 | 0.65 | $0.0099 | $0.006 |
| Manganese | 0.23 | 0.29 | $0.0077 | $0.002 |
| Molybdenum | 0.02 | 0.02 | $0.0176 | $0.000 |
| Nickel | 2.10 | 2.72 | $0.0099 | $0.027 |
| Oxygen | 14.50 | 18.71 | – | – |
| Phosphorus | 0.03 | 0.03 | $0.0001 | – |
| Lead | 0.03 | 0.04 | $0.0020 | – |
| Sulfur | 0.34 | 0.44 | $0.0001 | – |
| Silicon | 6.31 | 8.14 | $0.0001 | $0.001 |
| Tin | 0.51 | 0.66 | $0.0198 | $0.013 |
| Tantalum | 0.02 | 0.02 | $0.1322 | $0.003 |
| Titanium | 0.23 | 0.30 | $0.0198 | $0.006 |
| Tungsten | 0.02 | 0.02 | $0.2203 | $0.004 |
| Vanadium | 0.03 | 0.04 | $0.0991 | $0.004 |
| Zinc | 0.54 | 0.69 | $0.0028 | $0.002 |

The iPhone is 24 percent aluminum, the most abundant metal on earth. Aluminum is very light and cheap. It comes from bauxite, which is often strip-mined. It takes four tons of bauxite to make one ton of aluminum.

The iPhone is about 5 percent cobalt by weight (5.11 percent in the analysis above). Most of the cobalt is in the lithium-ion battery and is mined in the Democratic Republic of Congo. The mines there are almost completely unregulated. Workers, including children, toil around the clock. Deaths and injuries are common.

Oxygen, hydrogen, and carbon in the iPhone are associated with different alloys. Indium tin oxide functions as a conductor for the touchscreen. Aluminum oxides are in the casing. Silicon oxides are found in the microchip. (Small amounts of arsenic and gallium are also in the microchip.)

Silicon makes up 6 percent of the phone.

Merchant discovered that 34 kilograms (75 pounds) of ore would have to be mined to have the materials for one 129-gram iPhone.

A billion iPhones had been sold by 2016, which translates into 34 billion kilos (37 million tons) of mined rock. That’s a lot of moved earth – and it leaves a mark. Each ton of ore processed for metal extraction requires around three tons of water. This means that each iPhone “polluted” around 100 liters (or 26 gallons) of water… Producing 1 billion iPhones has fouled 100 billion liters (or 26 billion gallons) of water.
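The arithmetic behind these totals can be retraced in a few lines. The sketch below uses only the figures quoted above (34 kilograms of ore per phone, 1 billion phones, three tons of water per ton of ore). The unit conversions are my own assumptions, not the book's: a liter of water is taken to weigh about one kilogram, and "tons" are taken to be US short tons, since that is the reading that reproduces the 37 million figure.

```python
# Figures quoted in the text
ORE_KG_PER_PHONE = 34            # kg of ore mined per iPhone
PHONES_SOLD = 1_000_000_000      # iPhones sold by 2016
WATER_TONS_PER_ORE_TON = 3       # tons of water per ton of ore processed

# Assumptions (not from the text): unit conversions
KG_PER_SHORT_TON = 907.18474     # one US short ton in kilograms
LITERS_PER_GALLON = 3.785411784  # one US gallon in liters
# One liter of water is assumed to weigh about one kilogram.


def footprint(phones):
    """Return ore and water totals for the given number of phones."""
    ore_kg = ORE_KG_PER_PHONE * phones
    water_liters = ore_kg * WATER_TONS_PER_ORE_TON  # kg of water ~ liters
    return {
        "ore_kg": ore_kg,
        "ore_short_tons": ore_kg / KG_PER_SHORT_TON,
        "water_liters": water_liters,
        "water_gallons": water_liters / LITERS_PER_GALLON,
    }


per_phone = footprint(1)
total = footprint(PHONES_SOLD)
```

With unrounded inputs the water figure comes to 102 liters per phone, which the book rounds to 100 before converting to gallons.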

SCRATCHPROOF

In the early 1950s, Don Stookey, an inventor for Corning, discovered a form of glass that didn’t break. He was experimenting and accidentally heated lithium silicate to 900 degrees Celsius instead of 600. The silicate changed into an off-white substance which didn’t break when it fell on the floor.

(Photo of Corningware casserole dishes by Splarka, Wikimedia Commons)

In the early 1960s, Corning kept experimenting with the goal of creating even stronger glass. Eventually they created Chemcor, which is fifteen times stronger than regular glass.

By 1969, 42 million dollars had been invested in Chemcor. Unfortunately, nobody wanted it. Chemcor was too strong for car windshields, for instance. To survive some crashes, the windshield must break. But with Chemcor, the human skull would break against the windshield.

In 2005, Corning started looking at Chemcor again to see if it could be used as strong, affordable, and scratchproof glass in cellphones. So-called Gorilla Glass was invented and is now used in iPhones and other smartphones.

(Illustration by Artsiom Kusmartseu)

MULTITOUCHED

Bent Stumpe, a Danish engineer working at CERN, invented capacitive multitouch in the 1970s. Steve Jobs later claimed that Apple invented multitouch, but that claim is not accurate. As with much else in the iPhone, Apple improved the technology and used it in a new way. But Apple didn’t invent it.

Several people, in addition to Stumpe, invented multitouch or a precursor to multitouch. Bill Buxton and his team were working on multitouch at the University of Toronto in 1985. Buxton says that Bob Boie, at Bell Labs, probably came up with the first working multitouch system.

Engineer Eric Arthur Johnson invented a capacitive touchscreen for air traffic controllers in 1965.

…We do know what Johnson cited as prior art in his patent, at least: two Otis Elevator patents, one for capacitance-based proximity sensing (the technology that keeps the doors from closing when passengers are in the way) and one for touch-responsive elevator controls. He also named patents from General Electric, IBM, the U.S. military, and American Mach and Foundry. All six were filed in the early to mid-1960s; the idea for touch control was “in the air” even if it wasn’t being used to control computer systems.

Finally, he cites a 1918 patent for a “type-writing telegraph system.” Invented by Frederick Ghio, a young Italian immigrant who lived in Connecticut, it’s basically a typewriter that’s been flattened into a tablet-size grid so each key can be wired into a touch system. It’s like the analog version of your smartphone’s keyboard. It would have allowed for the automatic transmission of messages based on letters, numbers, and inputs – the touch-typing telegraph was basically a pre-proto-Instant Messenger.

William Norris, CEO of the supercomputer firm Control Data Corporation (CDC), fervently believed in touchscreens as the key to digital education. Norris commercialized PLATO – Programmed Logic for Automatic Teaching Operations. By 1964, PLATO had a touchscreen. Light sensors on the four sides of the screen registered wherever a finger touched the screen.

Wayne Westerman, an electrical engineering graduate student at the University of Delaware, invented a form of multitouch in his 1999 PhD dissertation. At last multitouch was poised to go mainstream.

Westerman’s mother had chronic back pain, while Westerman himself developed tendonitis in his wrists. When Westerman finished undergraduate studies at Purdue, he followed Neal Gallagher, a favorite professor, to the University of Delaware.

Westerman’s wrist pain grew worse, which pushed him to seek a solution. He invented a set of gestures to supplant the mouse and keyboard.

Westerman founded FingerWorks in 2001 with his dissertation advisor, Dr. John Elias.

At the beginning of 2005, FingerWorks’ iGesture pad won the Best of Innovation award at CES, the tech industry’s major annual trade show.

Still, at the time, Apple execs weren’t convinced that FingerWorks was worth pursuing – until the ENRI group decided to embrace multitouch.

Merchant comments:

Apple made multitouch flow, but they didn’t create it. And here’s why that matters: Collectives, teams, multiple inventors, build on a shared history. That’s how a core, universally adopted technology emerges…

(Illustration by Onyxprj)

PROTOTYPING

In the summer of 2003, Jony Ive decided the multitouch project was ready to be shown to Steve Jobs. At first, Jobs dismissed it. But then he embraced it. Later, Jobs even claimed that he invented it.

There was still a great deal of work to be done. The project went on lockdown in order to keep it completely secret. At this point, the researchers weren’t thinking about a phone at all.

(Image by BP22Heber, Wikimedia Commons)

The project languished until late 2004, when Steve Jobs announced to the group that Apple was going to make a phone. It would take two years to get Apple’s operating system onto a phone.

Executives would clash; some would quit. Programmers would spend years of their lives coding around the clock to get the iPhone ready to launch, scrambling their social lives, their marriages, and sometimes their health in the process.

LION BATTERIES

Merchant tells of his visit to SQM, or Sociedad Química y Minera de Chile – the Chemical and Mining Society of Chile. SQM is the leading producer of potassium nitrate, iodine, and lithium. It’s located in Salar de Atacama in the Atacama Desert, the most arid place on earth. The desert gets half an inch of rainfall per year, and some areas much less.

Chilean miners work this alien environment every day, harvesting lithium from vast evaporating pools of marine brine. That brine is a naturally occurring saltwater solution that’s found here in huge underground reserves. Over the millennia, runoff from the nearby Andes Mountains has carried mineral deposits down to the salt flats, resulting in brines with unusually high lithium concentrations. Lithium is the lightest metal and least dense solid element, and while it’s widely distributed around the world, it never occurs naturally in pure elemental form; it’s too reactive. It has to be separated and refined from compounds, so it’s usually expensive to get. But here, the high concentration of lithium in the salar brines combined with the ultradry climate allows miners to harness good old evaporation to obtain the increasingly precious metal.

(Lithium hydroxide with carbonate growths, Photo by Chemicalinterest, Wikimedia Commons)

Because lithium-ion batteries are essential for smartphones, tablets, laptops, and electric cars, lithium is increasingly referred to as “white petroleum.” Lithium doubled in value in the past couple years based on a jump in projected demand.

While doing postdoc work at Stanford in the early 1970s, chemist Stan Whittingham discovered a way to store lithium ions in sheets of titanium sulfide. This formed the basis for a rechargeable battery.

Whittingham developed the lithium-ion battery while working for Exxon. Hot on the heels of an oil crisis, Exxon had decided that it wanted to be the leading energy company and the leading producer of electric vehicles. But the lithium-ion battery was expensive to produce. And it had flammability issues. Once the oil crisis had passed, Exxon returned to its focus on producing oil.

The recent jumps in projected demand are mostly due to the opening of Tesla’s Gigafactory, which will be the world’s largest lithium-ion-battery factory. The global lithium-ion-battery market is expected to double to $77 billion by 2024, says Transparency Market Research.

(Photo of Tesla’s Gigafactory by Planet Labs, Wikimedia Commons)

IMAGE STABILIZATION

There are obvious similarities between the pitches for two different mass-market cameras:

- Exhibit A: You Press the Button, We Do the Rest.

- Exhibit B: We’ve taken care of the technology. All you have to do is find something beautiful and tap the shutter button.

Merchant explains:

Exhibit A comes to us from 1888, when George Eastman, the founder of Kodak, thrust his camera into the mainstream with that simple eight-word slogan. Eastman had initially hired an ad agency to market his Kodak box camera but fired them after they returned copy he viewed as needlessly complicated. Extolling the key virtue of his product – that all a consumer had to do was snap the photos and then take the camera into a Kodak shop to get them developed – he launched one of the most famous ad campaigns of the young industry.

Exhibit B is for the iPhone camera. The two ads are similar in their focus on ease of use and in their targeting of the average consumer.

At first, the 2-megapixel camera included on the iPhone wasn’t remarkable. But it wasn’t a priority at that point. By 2016, there were 800 employees dedicated to the camera, an 8-megapixel unit with a Sony sensor, optical image-stabilization module, and a proprietary image-signal processor.

SENSING MOTION

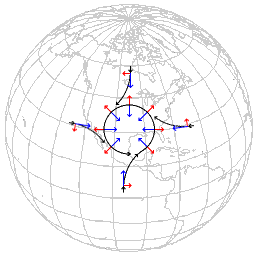

A mass moving in a rotating system experiences a force perpendicular to the direction of motion and to the axis of rotation. This is the Coriolis effect. The Foucault pendulum in the Paris Observatory slowly changes direction over the course of a day due to this effect.

(Coriolis effect, Wikimedia Commons)

Merchant:

The gyroscope in your phone is a vibrating structure gyroscope (VSG). It is… a gyroscope that uses a vibrating structure to determine the rate at which something is rotating. Here’s how it works: A vibrating object tends to continue vibrating in the same plane if, when, and as its support rotates. So the Coriolis effect – the result of the same force that causes Foucault’s pendulum to rotate to the right in Paris – makes the object exert a force on its own support. By measuring that force, the sensor can determine the rate of rotation.
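The last step, going from a measured force to a rate of rotation, follows from the standard expression for the Coriolis force. For a mass m moving at velocity v perpendicular to the axis of rotation, the force has magnitude F = 2·m·v·Ω, so Ω = F / (2·m·v). Here is a minimal sketch of that relationship. The function names and the sample numbers are my own illustrations, not values from the book or from any real sensor.

```python
def coriolis_force(mass_kg, velocity_m_s, rate_rad_s):
    """Magnitude of the Coriolis force on a mass moving perpendicular
    to the rotation axis: F = 2 * m * v * omega."""
    return 2.0 * mass_kg * velocity_m_s * rate_rad_s


def rotation_rate(force_n, mass_kg, velocity_m_s):
    """Invert the relationship: omega = F / (2 * m * v)."""
    return force_n / (2.0 * mass_kg * velocity_m_s)


# Hypothetical vibrating proof mass: 1 microgram moving at 1 m/s
mass = 1e-9       # kg (illustrative value)
velocity = 1.0    # m/s (illustrative value)

force = coriolis_force(mass, velocity, rate_rad_s=0.5)
recovered = rotation_rate(force, mass, velocity)
```

A real gyroscope chip has to deal with a velocity that oscillates, along with noise and calibration, so this shows only the core proportionality between force and rotation rate.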

Another sensor, the accelerometer, measures the acceleration of an object. When an iPhone is turned on its side, the accelerometer senses the pull of gravity along the phone’s sideways axis rather than its long axis. So the iPhone knows to flip the display from portrait to landscape.
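As a rough illustration of that decision, the sketch below picks an orientation by comparing how much of gravity falls along each screen axis. It is a toy under stated assumptions, not Apple's algorithm: readings are in units of g, `ax` is the short (sideways) axis, `ay` is the long axis, and the `threshold` for "lying flat" is an arbitrary value I chose.

```python
def orientation(ax, ay, previous="portrait", threshold=0.5):
    """Guess screen orientation from accelerometer readings (in g).

    ax: acceleration along the screen's short axis
    ay: acceleration along the screen's long axis
    If neither axis sees much of gravity, the phone is probably lying
    flat, so the previous orientation is kept.
    """
    if max(abs(ax), abs(ay)) < threshold:
        return previous
    return "portrait" if abs(ay) >= abs(ax) else "landscape"


upright = orientation(0.0, -1.0)                         # held upright
on_side = orientation(1.0, 0.05)                         # turned on its side
flat = orientation(0.1, 0.1, previous="landscape")       # lying on a table
```

Keeping the previous value when the phone is flat avoids the display flipping back and forth on a table, which is one reason a practical version would also add some hysteresis.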

Proximity sensors know to turn off the display when you lift the iPhone to your ear. They work by emitting tiny bursts of infrared radiation, which hit an object and are reflected back. If the object is close, then the reflected radiation is more intense.

(Photos of proximity sensor by Hyderabaduser, Wikimedia Commons)

For the iPhone to determine its place relative to everything else, it relies on GPS (Global Positioning System) – a globe-spanning system of satellites. GPS was developed by the U.S. Naval Research Laboratory in the 1960s and 1970s.

Today, every iPhone has a dedicated GPS chip that trilaterates with Wi-Fi signals and cell towers. Google Maps uses this technology.
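Trilateration means finding a position from known distances to several reference points. The sketch below is a simplified two-dimensional version with three reference points, which is my own reduction for illustration. Real GPS works in three dimensions and must also solve for the receiver's clock error. Subtracting the first circle equation from the other two turns the problem into two linear equations, solved here with Cramer's rule.

```python
import math


def trilaterate(anchors, distances):
    """Locate a point in 2D from its distances to three known anchors.

    anchors: three (x, y) tuples; distances: three matching ranges.
    Subtracting the first circle equation from the other two gives a
    2x2 linear system in x and y.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    d1, d2, d3 = distances

    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is ambiguous")

    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y


# Hypothetical towers and a phone at (3, 4)
towers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
phone = (3.0, 4.0)
ranges = [math.dist(phone, tower) for tower in towers]
estimate = trilaterate(towers, ranges)
```

With noisy real-world ranges the three circles rarely meet at a single point, so practical systems use more reference points and a least-squares fit.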

STRONG-ARMED

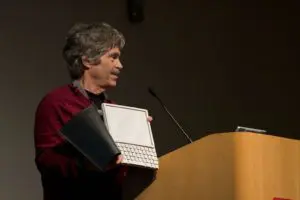

In 1977, Alan Kay and his colleague Adele Goldberg developed the concept of a Dynabook, which was powerful, dynamic, and very easy to use.

The Dynabook, which looks like an iPad with a hard keyboard, was one of the first mobile-computer concepts ever put forward, and perhaps the most influential. It has since earned the dubious distinction of being the most famous computer that never got built.

(Alan Kay and the prototype of Dynabook, Photo by Marcin Wichary, Wikimedia Commons)

Kay is one of the fathers of personal computing. He once said that the Mac was the “first computer worth criticizing.” Kay holds that the Dynabook still has not been built. The smartphone, shaped in part by marketing departments, simply gives people more of what they already wanted, such as news and social media.

Because Moore’s law has been in effect for fifty years now, computer chips (which include transistors) have gotten dramatically smaller, more powerful, and less energy intensive. Moore’s law may be slowing down. But depending upon progress in areas such as quantum computing, there could still be much room for improvement before any limit is reached.

The first iPhone processor had 137,500,000 transistors. But the iPhone 7, released 9 years after the first iPhone, has 3.3 billion transistors, about 24 times as many. Whatever app you just downloaded has more computing power than the first mission to the moon.
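Dividing the two transistor counts quoted above gives the growth factor, and taking its base-2 logarithm gives the number of doublings over the nine years. This quick check uses only those figures. The comparison with a two-year doubling period reflects the usual statement of Moore's law, not a claim from the book.

```python
import math

FIRST_IPHONE_TRANSISTORS = 137_500_000
IPHONE_7_TRANSISTORS = 3_300_000_000
YEARS_BETWEEN = 9

ratio = IPHONE_7_TRANSISTORS / FIRST_IPHONE_TRANSISTORS  # growth factor
doublings = math.log2(ratio)                             # doublings in 9 years
years_per_doubling = YEARS_BETWEEN / doublings

print(f"growth factor: {ratio:.0f}x")
print(f"doublings: {doublings:.2f}")
print(f"years per doubling: {years_per_doubling:.2f}")
```

The growth factor is 24, which works out to one doubling roughly every two years, in line with the usual statement of Moore's law.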

The other part of the story is a breakthrough low-power processor, without which the iPhone battery would drain far too quickly. The ARM processor is the most popular ever. 95 billion have been sold, with 15 billion shipped in 2015 alone. ARM chips are in everything: smartphones, computers, wristwatches, cars, coffeemakers, etc.

ARM stands for Acorn RISC Machine. RISC is reduced instruction set computing. Berkeley researchers developed RISC after they observed that most computer programs weren’t using the majority of a given processor’s instruction set.

(Acorn RISC PC ARM-710 CPU, Photo by Flibble, Wikimedia Commons)

Sophie Wilson and Steve Furber were star engineers for Acorn, a company founded by Hermann Hauser after he met Wilson and saw some of her designs for various machines. Wilson visited a group in Phoenix that designed the processor for Acorn’s computer. Wilson was surprised to find “two senior engineers and a bunch of school kids.” Wilson and Furber realized that they could develop their own RISC CPU for Acorn. Merchant quotes Wilson:

“It required some luck and happenstance, the papers being published close in time to when we were visiting Phoenix. It also required Hermann. Hermann gave us two things that Intel and Motorola didn’t give their staff: He gave us no resources and no people. So we had to build a microprocessor the simplest possible way, and that was probably the reason that we were successful.”

Also, Acorn wanted to simplify their designs. So they developed SoC, or System on a Chip, which integrates all the components of a computer onto one chip. Acorn didn’t realize how important SoC would become.

Merchant describes the evolution of apps for the iPhone:

The first iPhone shipped with sixteen apps, two of which were made in collaboration with Google. The four anchor apps were laid out on the bottom: Phone, Mail, Safari, and iPod. On the home screen, you had Text, Calendar, Photos, Camera, YouTube, Stocks, Google Maps, Weather, Clock, Calculator, Notes, and Settings. There weren’t any more apps available for download and users couldn’t delete or even rearrange the apps. The first iPhone was a closed, static device.

Then Jobs, continuously pressured by software developers, decided that they would allow web apps. Brett Bilbrey, who was senior manager of Apple’s Advanced Technology Group until 2013, observed:

“The thing with Steve was that nine times out of ten, he was brilliant, but one of those times he had a brain fart, and it was like, ‘Who’s going to tell him he’s wrong?'”

If mounting pressure from developers and Apple’s own executives wasn’t enough, there was the fact that the iPhone sold poorly for the first 3 to 6 months. Scott Forstall finally convinced Jobs to allow apps. Merchant:

…This was arguably the most important decision Apple made in the iPhone’s post-launch era. And it was made because developers, hackers, engineers, and insiders pushed and pushed. It was an anti-executive decision. And there’s a recent precedent – Apple succeeds when it opens up, even a little.

The iPod took off when Apple made iTunes for Windows. Before that, the iPod hardly sold.

If an app was approved for the iPhone and if it was monetized, then Apple would take a 30 percent cut.

…And that was when the smartphone era entered the mainstream. That’s when the iPhone discovered that its killer app wasn’t the phone, but a store for more apps.

(iPhone apps and app store, Photo by Michael Damkier)

There are over 2 million apps in the App Store today. As of 2014, six years after the launch of the App Store, over 627,000 jobs have been created based on iOS and U.S.-based developers have earned more than $8 billion.

On the other hand, the majority of the app money is going to games and streaming media – services designed to be as addictive as possible. This is part of Kay’s point. We have the technology for a Dynabook. We have the technology to help us engage in productive and creative pursuits. But consumerism – channeled by marketing departments – has turned mobile computers into consumption devices.

ENTER THE iPHONE

In the mid-2000s, top engineers at Apple were regularly disappearing mysteriously. They ended up doing top secret work on what would become the iPhone. And they had time for little else. Everyone on the team was brilliant. The mission was impossible. The deadlines were impossible. Quite a few marriages were ruined.

The iPod didn’t sell well in its first two years. Finally Apple introduced iTunes software so that people could manage their iPods from computers running Windows, rather than just from Apple computers. After Apple’s success with iPod hardware and iTunes software, people both inside and outside Apple were wondering what else the company could do. Many ideas were mentioned, including a camera, a phone, and an electric car.

One thing everyone at Apple agreed on was that, before the iPhone, cell phones were “terrible.” Merchant:

“Apple is best when it’s fixing things that people hate,” Greg Christie tells me. Before the iPod, nobody could figure out how to use a digital music player; as Napster boomed, people took to carting around skip-happy portable CD players loaded with burned albums. And before the Apple II, computers were considered too complex and unwieldy for the lay person.

It took time to convince Steve Jobs that Apple should do a phone. Mike Bell, who’d worked at Apple for fifteen years and at Motorola’s wireless division before that, was one of those who helped convince Jobs. Bell was sure that computers, music players, and cell phones would converge. Eventually Jobs agreed.

Jobs contacted Bas Ording and Imran Chaudhri of the touchscreen-tablet project. Jobs said, “We’re gonna do a phone.” The engineers got to work. Many features of the iPhone that we now take for granted were the result of persistent tinkering.

(Photo by Sergey Gavrilichev)

But despite compelling multitouch demos, the team still lacked a coherent concept. In February 2005, Jobs gave the team a two-week ultimatum. The team came through, and Jobs was pleased. This meant a great deal more work, of course. Then Jobs gave a presentation to the Top 100 at Apple. It was another huge success.

Soon there were two separate approaches, code-named P1 and P2. P1 was the iPod phone. P2 was an evolving hybrid of multitouch technology and Mac software. Tony Fadell ran P1, while Scott Forstall managed P2. It’s not clear whether it was a good idea to have these two teams compete, given how much political conflict later erupted on the iPhone project.

The iPhone’s code name was Purple. Forstall’s group was viewed as the underdog by many, since Fadell had been responsible for many millions of iPod sales. But soon the touchscreen approach won out.

The next battle was over the operating system. Fadell’s group wanted to do it like the iPod, which used a rudimentary operating system. But Forstall’s team wanted to take Apple’s main operating system, OS X, and shrink it down. One top engineer, Richard Williamson, said:

“There were some epic battles, philosophical battles about trying to decide what to do.”

Once basic scrolling operations were demonstrated on the stripped-down OS X, the decision was essentially made: OS X.

(Photo by Mohamed Soliman)

HEY, SIRI

Merchant:

Siri is really a constellation of features – speech-recognition software, a natural-language user interface, and an artificially intelligent personal assistant. When you ask Siri a question, here’s what happens: Your voice is digitized and transmitted to an Apple server in the Cloud while a local voice recognizer scans it right on your iPhone. Speech-recognition software translates your speech into text. Natural-language processing parses it. Siri consults what tech writer Steven Levy calls the iBrain – around 200 megabytes of data about your preferences, the way you speak, and other details. If your question can be answered by the phone itself (“Would you set my alarm for eight a.m.?”), the Cloud request is canceled. If Siri needs to pull data from the web (“Is it going to rain tomorrow?”), to the Cloud it goes, and the request is analyzed by another array of models and tools.
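To make the routing step in that description concrete, here is a toy sketch in Python. It is not Apple's implementation: the function names, the keyword list, and the idea of deciding by keyword match are all assumptions made up for illustration. It only shows the shape of the decision Merchant describes, where a request is answered on the phone when possible and otherwise sent on to a server.

```python
from dataclasses import dataclass

# Hypothetical list of topics the phone could handle by itself.
# A real assistant would use trained language models, not keywords.
LOCAL_KEYWORDS = ("alarm", "timer", "reminder")


@dataclass
class Response:
    handled_by: str  # "device" or "cloud"
    text: str


def recognize_speech(audio: str) -> str:
    """Stand-in for speech recognition: the 'audio' here is already text."""
    return audio.strip().lower()


def can_answer_locally(text: str) -> bool:
    """Return True if the request mentions something the phone can do itself."""
    return any(word in text for word in LOCAL_KEYWORDS)


def handle_request(audio: str) -> Response:
    """Route a request to the device or to the (imaginary) cloud service."""
    text = recognize_speech(audio)
    if can_answer_locally(text):
        # In Merchant's description, the pending Cloud request is canceled here.
        return Response("device", f"Handled on the phone: {text}")
    return Response("cloud", f"Forwarded to the server: {text}")


if __name__ == "__main__":
    for question in ("Would you set my alarm for eight a.m.?",
                     "Is it going to rain tomorrow?"):
        print(handle_request(question).handled_by)
```

With the two example questions from the passage, the alarm request stays on the device and the weather question is forwarded.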

The history of artificial intelligence is quite fascinating. I wrote about that and related topics here: https://boolefund.com/future-of-the-mind/

(Photo by Christian Lagereek)

One recent divide in AI is whether the computer should learn through symbolic reasoning or through repeated exposure to extensive data sets. When it comes to perception – computer vision, computer speech, pattern recognition – the data-driven approach works best. Machine learning is another term for this type of approach.

One problem with machine-learned models, however, is that a human can have a hard time understanding what the computer actually “knows.”

Consider chess. At some point, computing power will be great enough that a computer will be able to “solve” the game of chess by figuring out every single possible chain of moves. Perhaps white can always win. Would we say that such a supercomputer is “intelligent”? A program like this is similar to an extremely high-powered calculator. We don’t say that calculators are “intelligent” just because they can quickly and accurately compute using astronomical numbers.

Part of the problem is that we still have much to learn about how the human brain works.

DESIGNED IN CALIFORNIA, MADE IN CHINA

Merchant writes about his visit to China:

The vast majority of plants that produce the iPhone’s component parts and carry out the device’s final assembly are based here, in the People’s Republic, where low labor costs and a massive, highly skilled workforce have made the nation an ideal place to manufacture iPhones (and just about every other gadget). The country’s vast, unprecedented production capabilities – the U.S. Bureau of Labor Statistics estimated that as of 2009 there were ninety-nine million factory workers in China – has helped the nation become the world’s largest economy. And since the first iPhone shipped, the company doing the lion’s share of the manufacturing is the Taiwanese Hon Hai Precision Industry Company, Ltd., better known by its trade name, Foxconn.

Foxconn is the single largest employer in mainland China; there are 1.3 million people on its payroll. Worldwide, among corporations, only Walmart and McDonald’s employ more. As of 2016, that was more than twice as many people as were working for the five most valuable tech companies in the United States – Apple (66,000), Alphabet (70,000), Amazon (270,000), Microsoft (64,000), and Facebook (16,000) – combined.
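The "more than twice" comparison can be checked with a few lines of Python, using only the headcounts quoted above (variable names are mine):

```python
# Headcounts as quoted in the text (2016 figures).
tech_headcounts = {
    "Apple": 66_000,
    "Alphabet": 70_000,
    "Amazon": 270_000,
    "Microsoft": 64_000,
    "Facebook": 16_000,
}
foxconn_headcount = 1_300_000

combined = sum(tech_headcounts.values())
ratio = foxconn_headcount / combined

print(f"Five tech companies combined: {combined:,}")  # 486,000
print(f"Foxconn / combined: {ratio:.2f}")             # 2.67
```

So Foxconn's payroll was roughly 2.7 times the combined headcount of those five companies.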

(Wikimedia Commons)

Foxconn was in the news when it was learned that many of its workers were committing suicide.

The epidemic caused a media sensation – suicides and sweatshop conditions in the House of iPhone. Suicide notes and survivors told of immense stress, long workdays, and harsh managers who were prone to humiliate workers for mistakes; of unfair fines and unkept promises of benefits.

Foxconn CEO Terry Gou installed large nets outside many of the buildings to catch falling bodies. The company also hired counselors, and made workers sign no-suicide pledges. Steve Jobs remarked that the suicide rates at Foxconn were within the national averages and were lower than at many U.S. universities. Perhaps not the best thing to say, although technically accurate.

Merchant continues:

Shenzhen was the first SEZ, or special economic zone, that China opened to foreign companies, beginning in 1980. At that time, it was a fishing village that was home to some twenty-five thousand people. In one of the most remarkable urban transformations in history, today, Shenzhen is China’s third-largest city, home to towering skyscrapers, millions of residents, and, of course, sprawling factories. And it pulled off the feat in part by becoming the world’s gadget factory. An estimated 90 percent of the world’s consumer electronics pass through Shenzhen.

Many, if not most, Chinese people believe strongly in hard work and constant improvement. They are driven in part by the memory or knowledge of how poor most Chinese were in the recent past. They fear that if they don’t work hard and keep improving, they’ll become very poor again.

Merchant spoke with as many people as he could. But he’s careful to note that he didn’t get a truly representative sample, which would have required a massive canvassing effort and interviewing thousands of employees.

Merchant learned that most workers viewed the pace of work as relentless. They agreed that most workers only last a year.

Also, many thought that the management culture was cruel. Managers often used public condemnation if a mistake was made or if quota wasn’t met. Workers were frequently expected to stay silent. Even asking to use the restroom was often met with a rebuke.

(Protest in 2011 outside new Apple Store in Hong Kong, Photo by SACOM, Wikimedia Commons)

Many Chinese workers would like to work for Huawei, a Chinese smartphone competitor. When one worker went to the recruiting office, they told him Huawei was full. But it wasn’t. He feels he was tricked into working for Foxconn. He suspects Foxconn has a deal with the recruiter.

Furthermore, Foxconn often didn’t keep promises. They offered free housing, but then charged exorbitant prices for electricity and water. Also, bonuses were often delayed. Moreover, many workers were told they would get overtime pay, but then received regular pay. Many workers were promised a raise but never got one.

SELLPHONE

Merchant writes:

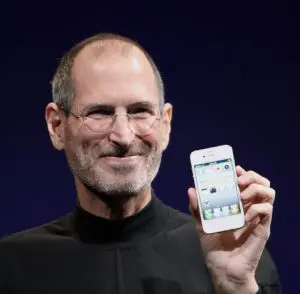

…Simply put, the iPhone would not be what it is today were it not for Apple’s extraordinary marketing and retail strategies. It is in a league of its own in creating want, fostering demand, and broadcasting technological cool. By the time the iPhone was actually announced in 2007, speculation and rumor over the device had reached a fever pitch, generating a hype that few to no marketing departments are capable of ginning up.

Of course, the product itself is impressive, and has to be for these marketing tactics to work so well.

(2010 Photo by Matthew Yohe)

In the late 1990s or early 2000s, Jobs began to use secrecy much more than before. The “magical” aspect of a new Apple product is heightened by the use of secrecy.

At the same time, Apple uses scarcity. After launching a new iPhone, Apple deliberately keeps supplies artificially low for at least a few weeks. In general, if something humans want is scarce, they tend to want it significantly more. This is a well-known psychological fact that Apple carefully exploits.

THE BLACK MARKET

Merchant:

Huaqiangbei is a bustling downtown bazaar: crowded streets, neon lights, sidewalk vendors, and chain smokers. My fixer Wang and I wander into SEG Electronics Plaza, a series of gadget markets surrounding a towering ten-story Best-Buy-on-acid on Huaqiangbei Road. Drones whir, high-end gaming consoles flash, and customers inspect cases of chips. Someone bumbles by on a Hoverboard. A couple shops over, a cluster of kiosks hock knockoff smartphones at deep discount. One saleswoman tries to sell me an iPhone 6 that’s running Google’s Android operating system. Another pitches a shiny Huawei phone for about twenty dollars.

(Huaqiangbei electronics market, Photo by Lzf)

Merchant, a bit later:

In downtown Shenzhen, a couple blocks from the famed electronics market, this smoky four-story building the size of a suburban minimall is an emporium for refurbished, reused, and black-market iPhones. You have to see it to believe it. I’ve never seen so many iPhones in one place – not at an Apple store, not raised by the crowd at a rock concert, not at CES. This is just piles and piles of iPhones of every color, model, and stripe.

Some booths are tricked-out repair stalls where young men and women examine iPhones with magnifying glasses and disassemble them with an array of tiny tools. There are entire stalls filled with what must be thousands of tiny little camera lenses. Others advertise custom casings… Another table has a huge pile of silver bitten-Apple logos that a man is separating and meting out. And it’s packed full of shoppers, buyers, repair people, all talking and smoking and poring over iPhone paraphernalia.

Some of the tables don’t sell iPhones to individuals but to wholesale buyers. Counterfeits are one thing. But these iPhones are virtually indistinguishable from the real thing.

Obvious counterfeits don’t last long:

In 2015, China shut down a counterfeit iPhone factory in Shenzhen, believed to have made some forty-one thousand phones out of secondhand parts. And you may have read headlines about counterfeit iPhone rings being busted up in the United States too, from time to time. In 2016, eleven thousand counterfeit iPhones and Samsung phones worth an estimated eight million dollars were seized in an NYPD raid. In 2013, border security agents seized two hundred and fifty thousand dollars’ worth of counterfeit iPhones from a Miami shop owner who says he sourced his parts legitimately.

But counterfeits are generally easy to spot because they won’t be compatible with specific software or they’ll have obvious glitches. So any iPhone that works like an iPhone is an iPhone, notes Merchant. Those iPhones available on the black market that have been made with iPhone parts are, for all practical purposes, iPhones, right?

Apple discourages customers from getting inside their phones. It uses proprietary screws. It issues takedown requests on grounds of copyright to blogs that post repair manuals. It voids warranties if anyone tries to repair their own phone or hires a third party to do so. Apple does not sell any replacement parts for iPhones; customers have to pay Apple to do the repair, often at high prices.

THE ONE DEVICE

Merchant:

There’s a reason that all those software engineers had migrated to the interface designers’ home base – the iPhone was built on intense collaboration between the two camps. Designers could pop over to an engineer to see if a new idea was workable. The engineer could tell them which elements needed to be adjusted. It was unusual, even for Apple, for teams to be so tightly integrated.

“One of the important things to note about the iPhone team was there was a spirit of ‘We’re all in this together,'” Richard Williamson says. “There was a ton of collaboration across the whole stack, all the way from Bas Ording doing innovative UI mock-ups down to the OS team with John Wright doing modifications to the kernel. And we could do this because we were all actually in this lockdown area. It was maybe just forty people at the max, but we had this hub right above Jony Ive’s design studio. In Infinite Loop Two, you had to have a second access key to get in there. We pretty much lived there for a couple of years.”

(Photo by Rafal Olechowski)

The team was composed of brilliant engineers across the board. They worked long hours, and constantly collaborated. They would sit down together and figure it out as they went. Many ideas that would have been delayed, or even dismissed, under most circumstances became workable in short order.

Williamson credits Steve Jobs with creating essentially a start-up inside a large company. Put the best engineers together on the most promising project, insulate them from everyone else, push them to meet very high expectations, and give them unlimited resources.

The team was very focused on making the iPhone easy and intuitive to use. They thought carefully about how people manipulate physical things in their daily life. They wanted these movements to give users clues about how to use the iPhone. It goes without saying there would never be a user’s manual – that would be a failure by the team.

Then there was hardware. Merchant spoke with Tony Fadell:

“We had to get all kinds of experts involved,” he says. “Third-party suppliers to help. We had to basically make a touchscreen company.” Apple hired dozens of people to execute the multitouch hardware alone. “The team itself was forty, fifty people just to do touch,” Fadell says. The touch sensors they needed to manufacture were not widely available yet. TPK, the small Taiwanese firm they found to mass-manufacture them, would boom into a multibillion-dollar company, largely on the strength of that one contract. And that was just touch – they were going to need Wi-Fi modules, multiple sensors, a tailor-made CPU, a suitable screen, and more.

Tony Fadell called the project “a moon shot… like the Apollo project.”

(Apollo program insignia, by NASA, Wikimedia Commons)

There were never enough people and never enough time. People worked seriously hard. Vacations and holidays were out of the question. There were quite a few divorces.

Merchant spoke with Evan Doll, who was on the iPhone team:

The ENRI team created a batch of interaction demos on an experimental touchscreen rig – right before Apple needed a successor to the iPod. FingerWorks came to market with consumer-friendly multitouch – just in time for the ENRI crew to use it as a foundation. Computer chips had to shrink. “So much of it is timing and getting lucky,” Doll says. “Maybe the ARM chips that powered the iPhone had been in development for a very long time, and maybe fortuitously had reached a happy place in terms of their capabilities. The stars aligned.” They also aligned with lithium-ion battery technology, and with the compacting of cameras. With the accretion of China’s skilled labor force, and the surfeit of cheaper metals around the world. The list goes on. “It’s not just a question of waking up one morning in 2006 and deciding that you’re going to build the iPhone; it’s a matter of making these nonintuitive investments and failed products and crazy experimentation – and being able to operate on this huge timescale,” Doll says. “Most companies aren’t able to do that. Apple almost wasn’t able to do that.”

While Steve Jobs will always be associated with the iPhone, it’s clear that a great many people contributed to its creation.

Proving the lone-inventor myth inadequate does not diminish Jobs’s role as curator, editor, bar-setter – it elevates the role of everyone else to show he was not alone in making it possible. I hope my jaunt into the heart of the iPhone has helped demonstrate that the one device is the work of countless inventors and factory workers, miners and recyclers, brilliant thinkers and child laborers, and revolutionary designers and cunning engineers. Of long-evolving technologies, of collaborative, incremental work, of fledgling startups and massive public-research institutions.

BOOLE MICROCAP FUND

An equal-weighted group of micro caps generally far outperforms an equal-weighted (or cap-weighted) group of larger stocks over time. See the historical chart here: https://boolefund.com/best-performers-microcap-stocks/

This outperformance increases significantly by focusing on cheap micro caps. Performance can be further boosted by isolating cheap microcap companies that show improving fundamentals. We rank microcap stocks based on these and similar criteria.

There are roughly 10-20 positions in the portfolio. The size of each position is determined by its rank. Typically the largest position is 15-20% (at cost), while the average position is 8-10% (at cost). Positions are held for 3 to 5 years unless a stock approaches intrinsic value sooner or an error has been discovered.

The mission of the Boole Fund is to outperform the S&P 500 Index by at least 5% per year (net of fees) over 5-year periods. We also aim to outpace the Russell Microcap Index by at least 2% per year (net). The Boole Fund has low fees.

If you are interested in finding out more, please e-mail me or leave a comment.

My e-mail: jb@boolefund.com

Disclosures: Past performance is not a guarantee or a reliable indicator of future results. All investments contain risk and may lose value. This material is distributed for informational purposes only. Forecasts, estimates, and certain information contained herein should not be considered as investment advice or a recommendation of any particular security, strategy or investment product. Information contained herein has been obtained from sources believed to be reliable, but not guaranteed. No part of this article may be reproduced in any form, or referred to in any other publication, without express written permission of Boole Capital, LLC.